Why Anthropic Became a Pentagon Liability

Jayson Ambrose

Founder & CEO, Big Robot

Anthropic's clash with the Pentagon over Claude is not just a contract fight. It is a governance fight over whether the Department of War would accept a mission-critical AI governed by a vendor's own safety rules.

Usually, when I hear a story like "Trump Destroys Private US Company For Refusing To Build Killer Robots and Spy on US Citizens", my first reaction is: really?

I need to know more.

I've noticed a few different frames being presented:

- Anthropic is a private company and should not be compelled to do business with the government, as if this were a brand-new relationship being formed.

- The Pentagon reneged on the contract and is forcing new, unreasonable requirements on Anthropic.

- If the Pentagon doesn't like Anthropic's position, it can fire them. The supply-chain-risk designation is authoritarian, petty, and beyond the pale.

- OpenAI are soulless opportunists that are cool with killer robots and mass surveillance.

Look more closely at the timing, the changes to the product, the Pentagon's reasons for removing Anthropic, and the implications of actually removing them, and all these frames start to break down.

The Timeline

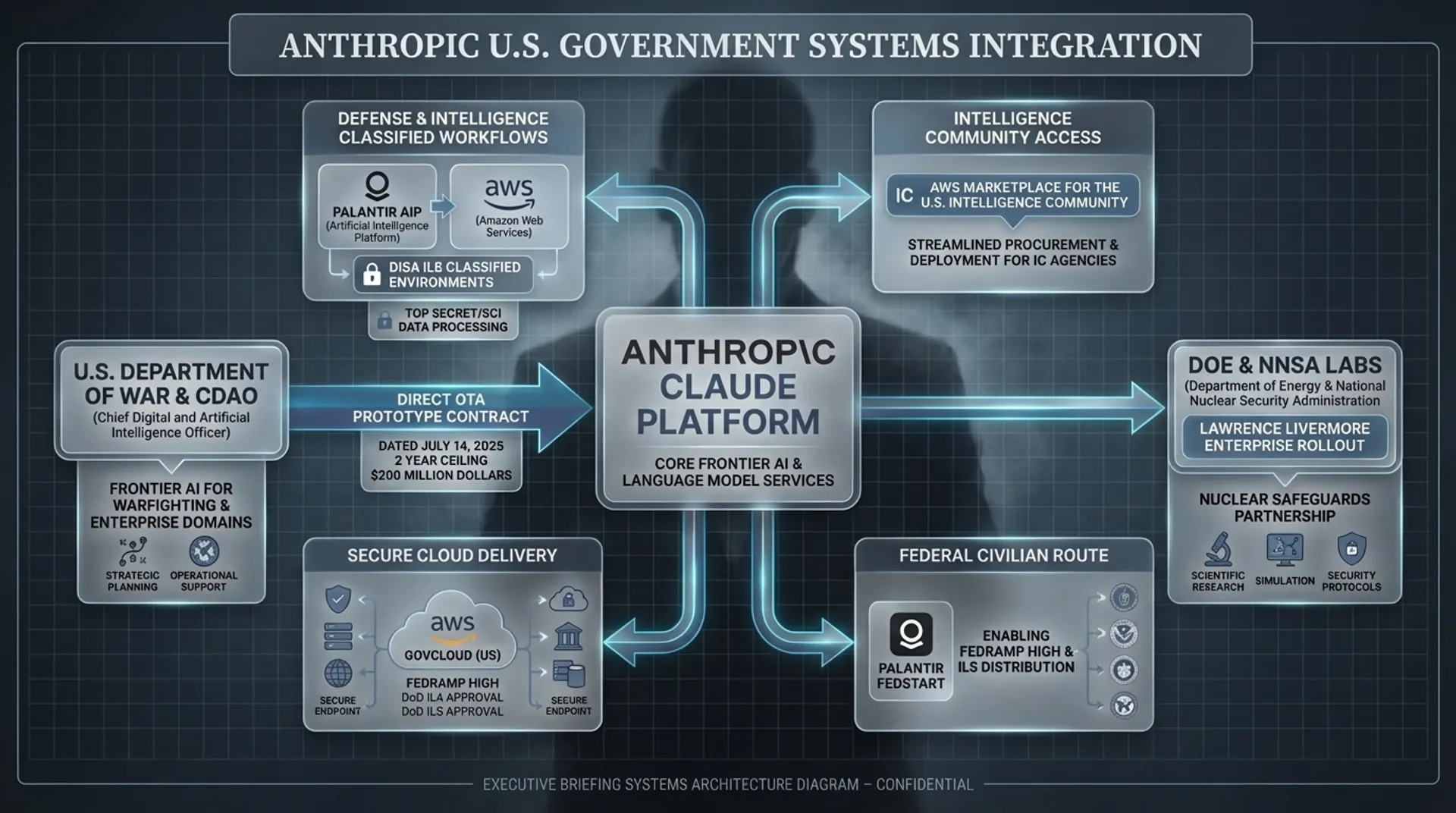

This is not a fight over whether Anthropic would enter into a brand-new relationship with the government. Anthropic already had a direct, two-year, $200 million prototype contract, signed in July 2025, to deploy Claude on classified networks. Anthropic's own complaint also says the company was already integrated indirectly through partners like Palantir.

That is a very different situation.

It wasn't the government trying to force a reluctant vendor into a new relationship. It was a customer that had already spent many months and hundreds of millions of taxpayer dollars integrating the system into classified, mission-critical infrastructure, and that now appeared to want to change the terms under which the system would be governed.

And once a model is wired into classified workflows, partner systems, and operator habits, removal stops looking like a normal vendor switch. You are unwinding integrations, revalidating workflows, retraining users, and eating disruption across programs already built around the capability.

That is not a small distinction. But this is where the next misleading frame comes in: the Department of War (DoW) knew what it was buying, accepted the "safety restrictions," and now wants them removed.

Taking a closer look at the timeline:

July 2025: The DoW awards Anthropic a two-year, $200 million contract to deploy customized AI models on classified U.S. defense networks, building on earlier indirect integrations via partners like Palantir.

Fall 2025: According to Anthropic's complaint, negotiations over a Claude deployment on GenAI.mil broaden into a push from the Department to allow Claude for "all lawful uses."

January 9, 2026: A Department memo, publicly posted January 12, 2026, directs acquisition officials to incorporate standard "any lawful use" language into AI contracts within 180 days.

January 21, 2026: Anthropic publishes an updated constitution for its flagship AI model.

February 24, 2026: In a face-to-face meeting at the Pentagon, Secretary Pete Hegseth praises Claude while issuing an ultimatum to Anthropic CEO Dario Amodei: allow Claude to be used for all lawful purposes, without Anthropic's requested exceptions, or face termination, a supply-chain-risk designation, and possible use of the Defense Production Act.

February 26, 2026: In a public statement, Amodei says Anthropic cannot in good conscience accede, adding that the company is willing to support national security uses except for two disputed categories.

February 27, 2026: The deadline passes without agreement. President Trump posts a signature tirade on Truth Social directing agencies to "immediately cease" using Anthropic technology and announcing a six-month phase-out for agencies like the Defense Department. OpenAI announces an agreement to deploy its models on the Pentagon's classified network and says the deal includes contractual and technical safeguards.

OpenAI and Anthropic both claim to have the same contractual requirements. So where's the difference?

What's this about a new constitution?

What Anthropic Publicly Refused to Change

Anthropic refused to amend its contract to allow "all lawful uses" of its AI models without explicit exceptions for things like mass domestic surveillance and fully autonomous weapons. But OpenAI says it reached a deal that preserves the same two core red lines Anthropic identified, while making them enforceable through a different deployment and contract structure.

So far, that still sounds like contract-law theater. Fine. Put different words on the paper. Everybody pinky-swears that nobody will do anything bad. The Department of War is obviously going to do whatever the hell it wants anyway. Why would this escalate into a scorched-earth blacklisting campaign?

Because the dispute is not just about paper.

External Safeguards vs. Internal Authority

Anthropic's new constitution matters here because Anthropic itself does not describe it as branding copy or a vague statement of values. In the January 22 post, the company says the constitution is a "crucial part" of training, that its content "directly shapes Claude's behavior," and that Anthropic treats it as the "final authority" on how Claude should behave. It also says the new constitution plays an "even more central role" in training than earlier versions. That is a very strong way to describe a document if you want readers to understand that this is not just policy sitting outside the model. Anthropic is telling the world that the constitution is part of how Claude becomes Claude.

That timing is hard to ignore. The Department was already pushing toward standard "any lawful use" language in AI contracts, and then Anthropic publicly released a new constitution that emphasized model character, internalized reasoning, hard constraints for some behaviors, and the constitution's role as final authority. Anthropic's own public position in the dispute was narrow: it said it supported all lawful national-security uses except for two red lines, mass domestic surveillance of Americans and fully autonomous weapons. But the constitution post gave those commitments a different texture. It made them look less like negotiable contract carve-outs and more like part of the model's intended nature.

Did Anthropic merely restate existing principles? Or did it change the product in a way that, from the DoW's perspective, made Claude more restrictive just as the Department was moving in the opposite direction?

That is where my thesis begins. There's no proof in the complaint or the blog posts that Anthropic deliberately rewired Claude Gov during the negotiation in order to block the Pentagon. The sources don't establish that, and they don't prove that the January constitution was applied to every specialized government deployment in exactly the same way. Anthropic itself says some specialized-use models do not fully fit the constitution.

But the public move still matters. My read is that Anthropic saw the paper carve-outs slipping and responded by making its disputed values harder to treat as mere contract language. If the Department was going to insist on stripping the carve-outs from the paper, Anthropic could make the fight about the model's governing logic instead.

OpenAI's deal makes the difference clearer. OpenAI says it got the same core red lines, but describes them as enforced through a "safety stack," cloud-only deployment, contract language, and humans in the loop. That is a very different posture from Anthropic publicly describing a constitution that directly shapes Claude's behavior and acts as the final authority on how it should behave.

If you are the Department of War, this is where the argument stops being abstract. "Safety stack" sounds operational. It sounds like something external, legible, and negotiable. "Final authority on how Claude should behave" sounds like the opposite. It sounds like the real fight is not over terms on paper, but over whether the product itself has already been taught to refuse certain categories of lawful-but-immoral use.

Why Claude Could Look Like an Operational Risk

The Pentagon is not shopping for a chatbot with a conscience. It wants the most capable AI model it can get, tuned for its purposes, and it wants to know exactly who is driving the thing.

The important point is that you do not get "the model" in some pure form. You get a model plus a pile of behavioral machinery layered on top of it, much of which determines what gets emphasized, softened, refused, or redirected.

That is what makes this a control problem. The model does not have to flatly refuse in order to screw you over. It can steer with selective framing. It can leave out a key fact, overstate one concern, bury an alternative, or smuggle subjectivity into what looks like objective analysis. This is about trust.

Once the vendor's private moral logic is quietly sitting inside the model's behavior, this is no longer just a fight over terms of use. Now you are talking about a mission-critical dependency whose ultimate governor may be somebody the Pentagon did not appoint, cannot order around, and cannot reliably detect in the moment.

And deep integration is what turns that from discomfort into an urgent removal problem. Removing a vendor from this kind of environment is not like uninstalling Slack. It means ripping out software, integrations, processes, training, documentation, SOPs, scripts, automations, authentication, access pathways, and all the top-secret human workflows built around them. It means swapping out a component that may already sit inside live operational systems. If the Pentagon thinks that component also contains a governance problem, it is not going to want a leisurely transition. The deeper Claude gets into the stack, the harder and more urgent removal becomes.

Seen from that angle, a supply-chain-risk response becomes easier to understand, even without assuming the whole story is just "the Pentagon really wants to do evil stuff." Maybe it does. The immediate problem is that it can't have some vendor-installed ghost in the machine potentially steering policy judgments and possibly even tactical operations.

Not a Simple Good vs. Evil Story

At the start, the story sounded simple enough: a private AI company refused to help the government do ugly things, and the government lashed out. Once you actually look at the timing, the integration history, and what Anthropic was saying about Claude's internal governing logic, the whole thing stops looking like "government mad at company with morals" and starts looking like a much nastier fight over control.

So none of the predictable frames really work. This was not a case of the government forcing Anthropic into a new relationship, not just a petty dispute over contract language, and not simply an irrationally authoritarian response to a replaceable vendor. Nor was the situation clarified by turning it into another opportunity to shit on Sam Altman, who admittedly kind of deserves it.

The real fight is about who gets to govern the behavior of a mission-critical AI model once it is embedded inside military systems. The problem is not that Anthropic has ethics. The problem is that Anthropic seems determined to make those ethics the authority inside the product itself. From the Pentagon's perspective, that is not a values disagreement. It is a chain-of-command problem in a system meant for decisions with life-and-death consequences, usually death.

Seen that way, supply chain risk is not just cartoon villain language. It's the blunt label a military bureaucracy uses when it thinks a mission-critical dependency contains an internal authority it neither controls nor fully trusts. The lazier versions of this story are flatter, simpler, and much more emotionally satisfying. They are also less true, as usual.